Ofir Bibi

VP Research · Lightricks

// Poke, tinker, prod and tweak. Repeat.

Building the foundation of generative media — from efficient open-source models to production systems reaching millions of creators worldwide.

Ofir Bibi is VP Research at Lightricks, where he leads the development of generative video models and applications. His team built LTX-2, the first open-source audio-video foundation model, and now focuses on the application layers that bring it to market.

Ofir drives both technical innovation and open-source community strategy, bridging fundamental ML research with practical product deployment. Born and raised in Jerusalem, he's always been a tinkerer — taking apart radios and asking how things work. That instinct now drives some of the most efficient video generation models ever built.

From the world's first real-time open-source video model to a complete audio-video foundation model — a two-year sprint to the frontier of generative media.

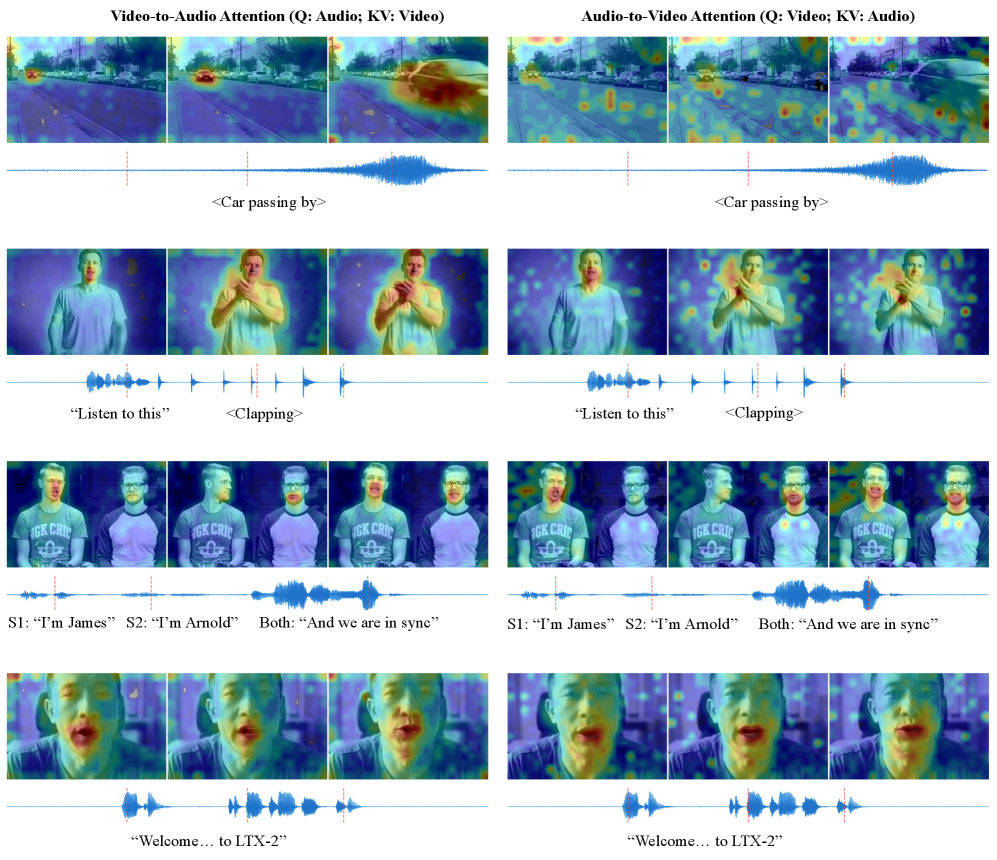

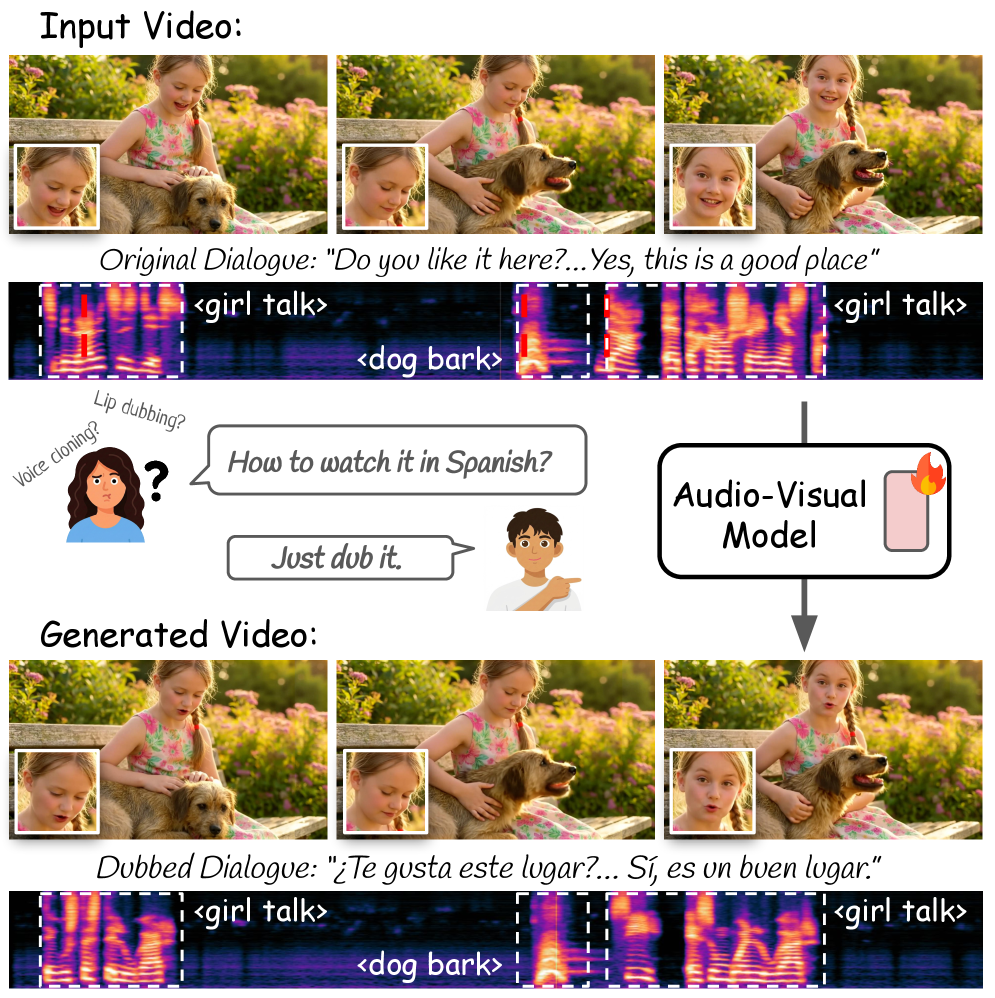

First open-source model to generate synchronized 4K audio and video

Announced with NVIDIA at CES 2026, LTX-2 was the first production-ready model to combine native audio and video generation with fully open weights, training code, and inference code — a milestone the industry called Lightricks' "DeepSeek moment." In human preference studies it performs comparably to Sora 2 and Veo 3, while running at 18× the speed of comparable open models. For the first time, anyone could train their own audio-visual IP directly into a foundation model.

Made high-quality AI video accessible on consumer hardware

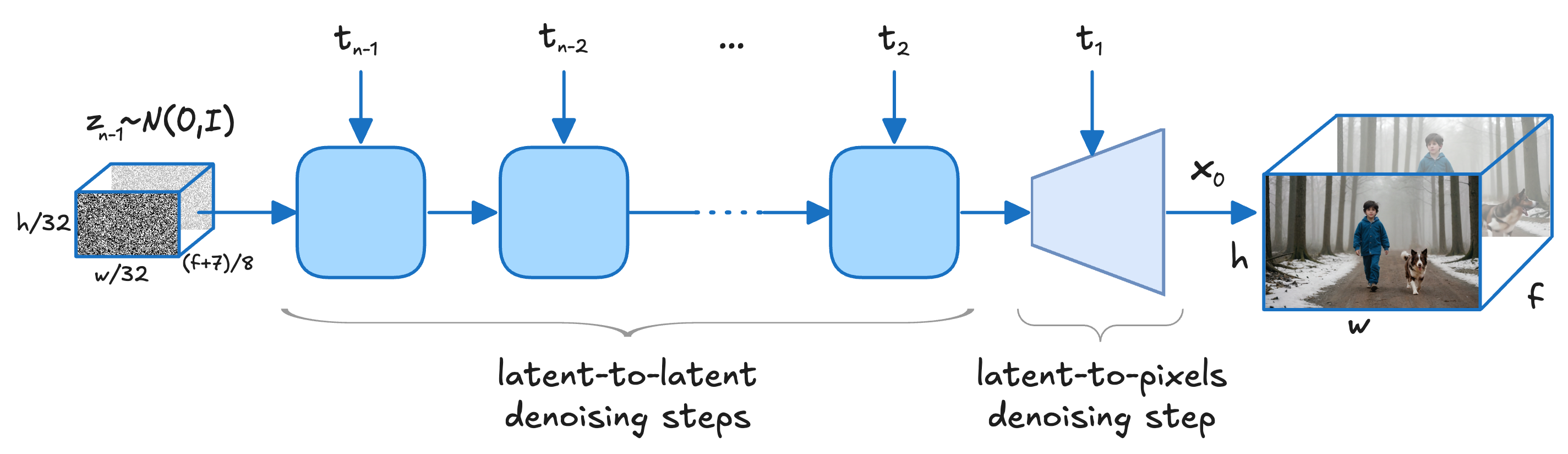

The 13B model introduced multiscale rendering — drafting motion coarsely first, then progressively refining detail — achieving speeds 30× faster than competing models of comparable size. What previously required $10,000 enterprise GPUs now ran on a consumer RTX card: 37 seconds to generate what took rivals over 25 minutes.

First open-source video model to run faster than real-time

LTXV was the first open-source video model to generate a 5-second clip in under 4 seconds, faster than the video plays back. Released with full weights and training code, it seeded a global developer community that reached 9,300+ GitHub stars and integrations into ComfyUI, Diffusers, and major creative platforms. Training on Google Cloud TPUs cut a six-month development timeline to four months.